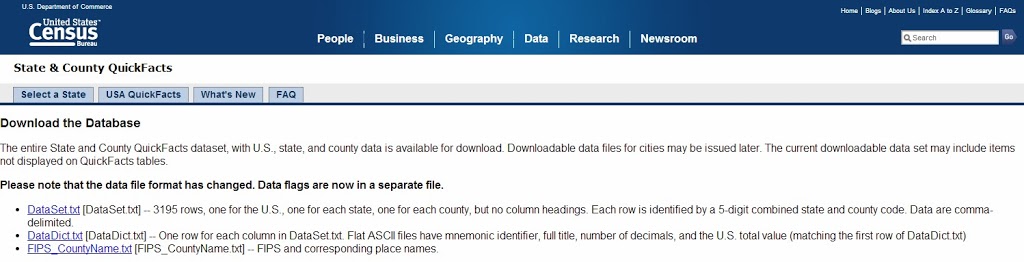

A Day of Data Warehousing…and Pie!

What better way to celebrate Pi day than by learning data warehousing fundamentals from two experts in the field? I can’t think of one!

Audrey Hammonds (B | T) and Julie Smith (B | T) of DataChix.com very generously decided to host a standalone, day-long class on the subject of data warehousing. As if getting to participate in a day of training for such a low price weren’t great enough, they also dedicated all proceeds from the class to The Cloverleaf School.

The event was modeled after a SQL Saturday pre-con, but it was a standalone event not attached to a SQL Saturday. Even without a SQL Saturday to bring in the crowds, attendance was great and far better than I expected! It struck just about the right balance: enough people to form a true class, but not so many that you’re lost in a sea of faces.

The logistics of the event were very well organized. The venue (the American Legion Post) was easy to find with easy parking. Upon arriving, check-in was quick and easy and a nice breakfast was available. The volunteers from The Cloverleaf School did a great job coordinating everything.

Audrey and Julie worked through the scenario of a pie business with no infrastructure as it grows and implements a data warehouse with regular ETL. They divided the material into two sections: database concepts and data warehouse design (Audrey) and ETL/SSIS (Julie). This format worked well as a representation of how job roles split in the real world, and the two of them kept up great, entertaining banter. I particularly enjoyed Audrey’s exercise in data modeling.

For lunch, what could be more appropriate on Pi Day (3/14) than pizza pie and various dessert pies? They were a hit!

After lunch, we took a moment to pose for a group photo by the tank, because hey, there’s a tank, why not pose for a group photo on it?

All in all, I felt the class was a great experience and I was very happy with the time spent. Not only was it for a great cause, but I got to meet some new people in the community and of course learned a lot. While I’ve learned about the various components in a full BI system, this is one of the first resources that has done a good job of tying them together from start to finish and painting a complete picture.

My organization currently runs the majority of its business functions from an OLTP database, with SSRS pulling data directly from it, so I am very interested in learning how to properly design and implement a data warehouse, ETL data into it, and leverage the data in interesting ways with SSAS and the variety of other tools that can illuminate an OLAP data source (SSRS, PerformancePoint, Power View, etc.). Stay tuned for more as I kick off that adventure!

Recently, I had the need to analyze phone call data to answer questions such as how many phone calls were received in a given day and how frequently voicemail answered instead of a live person. In this scenario, I was fortunate enough to have a fairly accessible phone system to work with — a Cisco UC520. While this guide is specific to working with a Cisco UC520 device, most Cisco phone systems (the UC series or anything utilizing CUE/CME) should be pretty comparable.
